Patents & Research Publications

Framework for Appraising Scrum Projects: Evaluating Performance in Agile Teams

(Patent Application Number: 20130189659 Date of Filing: 30th July 2012)

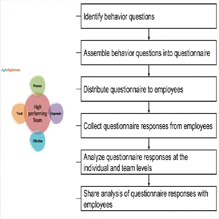

This patent application introduces a methodology for evaluating and computing appraisal information within agile teams, specifically tailored for Scrum projects. The framework uses a survey-based approach in which a questionnaire is published on the web, allowing team members to respond using their client devices. The questionnaire is designed to align with agile values and principles at both the team and individual level, and it captures both self-evaluation and peer-evaluation responses. The framework then computes performance indicators by aggregating the peer responses about each team member and by taking into account the discrepancy between an individual's self-perception of their performance and the peer responses about that individual.
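The two computations described above, aggregating peer responses and measuring the gap to the self-evaluation, can be illustrated with a minimal sketch. The function name, the use of a plain average, the 1 to 5 scale and the sample data are all assumptions made for illustration; the patent application's actual scoring scheme is not reproduced here.

```python
from statistics import mean

def appraisal_indicators(self_scores, peer_scores):
    """Hypothetical helper: per-member peer average and self-vs-peer gap.

    self_scores: {member: self-evaluation score}
    peer_scores: {member: [scores given by peers]}
    """
    indicators = {}
    for member, ratings in peer_scores.items():
        peer_average = mean(ratings)  # aggregate the peer responses
        indicators[member] = {
            "peer_average": peer_average,
            # positive gap: the member rates themselves above their peers
            "discrepancy": self_scores[member] - peer_average,
        }
    return indicators

# Illustrative data only (assumed 1-5 scale)
result = appraisal_indicators(
    {"asha": 5, "ben": 3},
    {"asha": [4, 3, 5], "ben": [4, 4, 4]},
)
```

A positive discrepancy suggests a member rates themselves more highly than their peers do, and a negative one suggests the opposite.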

Data Center Resource Simulator

(Application No. 20120060167, Published on: 08th March 2012)

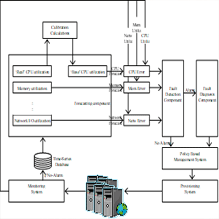

The design of high-performance, power-efficient and dependable cluster-based data centers has become an important issue with the increasing use of data centers in almost every sector of society: academic institutions, government agencies and a myriad of business enterprises. Commercial companies such as Google, Amazon, Akamai, AOL and Microsoft use thousands to millions of servers in a data center environment to handle high volumes of traffic and provide customized, round-the-clock (24x7) service.

Detecting And Diagnosing Misbehaving Applications in Virtualized Computing Systems

(Application No. 20120266026 Published on 18th October 2012)

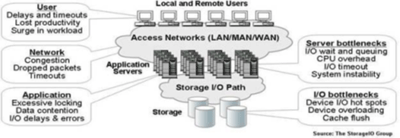

Misbehaving applications may be detected by monitoring system resource utilization in a virtualized computer system. Utilization may be forecasted based on historical utilization data for the system resources, collected when the application is known to be behaving normally. When the monitored utilization of system resources deviates from the forecasted utilization, an alert may be generated. When the alert is generated, system resources allocated to the application may be increased or decreased to prevent abnormal behavior in the virtualized computer system executing the misbehaving application.
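The forecast-and-compare step can be sketched as follows. This is only an illustration under stated assumptions: the forecast is taken to be the mean of the historical samples and the alert threshold is a multiple of their standard deviation. The function name, the `tolerance` parameter and the sample figures are hypothetical, and the application itself may use a different forecasting model.

```python
from statistics import mean, stdev

def utilization_alert(history, observed, tolerance=3.0):
    """Return True when observed utilization strays from a naive forecast.

    history: utilization samples (e.g. CPU %) recorded while the
             application was known to behave normally
    observed: the currently monitored utilization
    tolerance: assumed threshold, in standard deviations
    """
    forecast = mean(history)   # naive forecast (assumption)
    spread = stdev(history)    # normal variability around the forecast
    return abs(observed - forecast) > tolerance * spread

# Illustrative samples only
normal_cpu = [40, 42, 41, 39, 38]
quiet = utilization_alert(normal_cpu, 41)     # within the normal band
runaway = utilization_alert(normal_cpu, 75)   # far above the forecast
```

In a setting like the one described, a raised alert would then trigger a decision to increase or decrease the resources allocated to the application.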

The Anyplace 4.0 IoT Localization Architecture

The Internet of Things (IoT) refers to a large number of physical devices being connected to the Internet that are able to see, hear, think, perform tasks as well as communicate with each other using open protocols.

Indoor Geo-Localization System for Manufacturing Shopfloor and Selection of Appropriate Product

In a connected world where machinery, plant, parts, inventory, vehicles and people are ever connected, knowing their positions invariably becomes a key business aspect. GPS devices are commonly used for outdoor localization, but the sparse availability of GPS signals in indoor environments poses challenges for indoor usage. In this study, a manufacturing company with multiple factories and offices around the world wanted to scan the market to identify a geo-localization server for localizing IoT devices in indoor environments. Implementation cost and maintenance were to play a vital role in identifying the solution.

QUOTALOGIC: a DSS for reservation quota allocation to maximize capacity utilization of coaching trains

In long-distance passenger trains with multiple stops, passengers can use the train to travel between any pair of stations. If the same seat can be used for multiple bookings along the route, utilization increases. For this purpose, the inventory of seats and berths is divided among different station quotas. Improper quota allocation results in passengers being turned away while seats remain vacant. This business problem is addressed by our product QUOTALOGIC.

Introduction To Operations Research

Introduction to Operations Research has been the classic text on operations research. While building on the classic strengths of the text, the author continues to find new ways to make the text current and relevant to students. Since the text covers the subject holistically, the adaptation lies in the application of the concepts in examples and cases.

Business Applications of Operations Research

Operations Research is a bouquet of mathematical techniques that have evolved over the last six decades to improve the process of business decision making. It offers tools to optimize and find the best solutions to the myriad decisions that managers have to take in their day-to-day operations or while carrying out strategic planning. Today, with the advent of operations research software, these tools can be applied by managers even without any knowledge of the mathematical techniques that underlie the solution procedures.

Optimal Design of Timetables For Large Railways

This monograph presents a framework for the optimum design of timetables to maximise schedule robustness and minimise resource deployment, especially for large railway networks, wherein the problem has been formulated as a multi-objective non-linear model. The monograph's contribution is the formulation of methods to reduce the problem size to computationally manageable proportions, and thus enable planners to undertake network-wide optimisation studies. The monograph demonstrates that the design of timetables can be spread over a canvas covering not only the entire railway network, thus enabling study of the mutual interactions across the network, but also the optimisation of crew and rolling stock within the purview of the model.

Software Project Characteristics And Their Measures: Towards A Comprehensive Framework

We present a comprehensive list of project characteristics based on research conducted in one of the largest software development and IT services organizations which has hundreds of concurrent offshore outsourcing software projects at any time. This list of characteristics is based on data from three sources: a) existing literature, b) internal company knowledge based on the experience of past projects and c) opinion of industry experts.

Composite Dispatching Rules to Schedule Passenger-coaches in Indian Railways

We propose composite dispatching rules for scheduling passenger-coaches for Rajdhani services in Indian Railways; these are passenger services from different state capitals to the federal capital city of New Delhi. The effectiveness of several dispatching rules is compared with that of existing rules using percentage utilization and number of passenger-coaches.

Application of Value Based Requirement Prioritization in a Banking Product implementation

This paper describes the need for Value Based Requirement Prioritization (VBRP) in Core Banking transformation programs. We describe the VBRP tool selected for this purpose and how it was customized for use in a large product implementation in a bank.

Structured Method for Business Process Improvement

Based on consulting experience in several process improvement projects and on existing literature in the area, the authors present a structured method to improve an existing process. The method removes ambiguity in identifying the data and information required to analyze a business process and in selecting a suitable process improvement pattern.

Visibility of End-to-End business process in case of execution in multiple applications

Some processes consist of multiple sub-processes that are executed by multiple applications, and monitoring them becomes difficult without creating a single end-to-end process. We propose to use the transaction logs of these applications, along with correlation IDs, to identify and connect particular instances of activity execution to a particular transaction execution in the end-to-end process, and to use this data for monitoring processes.
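The idea of connecting log entries from several applications through a shared correlation ID can be sketched as below. The log layout (dictionaries with `correlation_id`, `timestamp` and `activity` keys), the function name and the sample entries are assumptions made for illustration, not the format used in the paper.

```python
from collections import defaultdict

def stitch_end_to_end(*application_logs):
    """Group log entries from several applications by correlation ID.

    Each log is a list of dicts with the assumed keys
    'correlation_id', 'timestamp' and 'activity'.
    Returns {correlation_id: entries ordered by timestamp}.
    """
    traces = defaultdict(list)
    for log in application_logs:
        for entry in log:
            traces[entry["correlation_id"]].append(entry)
    for events in traces.values():
        events.sort(key=lambda e: e["timestamp"])  # rebuild execution order
    return dict(traces)

# Hypothetical logs from two applications handling the same transactions
order_app = [
    {"correlation_id": "T1", "timestamp": 1, "activity": "order received"},
    {"correlation_id": "T2", "timestamp": 2, "activity": "order received"},
]
billing_app = [
    {"correlation_id": "T1", "timestamp": 5, "activity": "invoice sent"},
    {"correlation_id": "T1", "timestamp": 3, "activity": "credit checked"},
]
traces = stitch_end_to_end(order_app, billing_app)
t1_path = [e["activity"] for e in traces["T1"]]
```

Here transaction T1 can be followed across both applications, while T2 is visibly stalled after its first activity, which is the kind of end-to-end visibility the abstract describes.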

Concept of a system for Addressing Bad Publicity in Social Media Using Knowledge in Business Process Models

In this work-in-progress research paper we describe a concept of a computerized system which can help in addressing the issue of bad publicity in posts on blogging platforms such as Tumblr or WordPress, on Twitter and Facebook, and on other public internet forums such as CNET. The solution has three parts. First, identifying and searching the web for such comments and creating a bag of words from every such comment. Second, creating an index of the words occurring in process models and assigning them weights in different process models based on their frequency of occurrence. Third, creating an association between the bag of words derived from the comment and the process models using the index of words.
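The three parts can be illustrated with a small sketch. It assumes that each process model is available as plain text (for example, its activity labels joined together), that weights are relative word frequencies, and that the association score is a simple weighted sum. All function names and sample texts are hypothetical, and the paper's own weighting scheme may differ.

```python
import re
from collections import Counter

def bag_of_words(text):
    """Part 1: lower-case word counts for a comment or a model text."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def build_index(process_models):
    """Part 2: {word: {model: weight}}, weight = relative frequency (assumed)."""
    index = {}
    for name, text in process_models.items():
        counts = bag_of_words(text)
        total = sum(counts.values())
        for word, n in counts.items():
            index.setdefault(word, {})[name] = n / total
    return index

def rank_models(comment, index):
    """Part 3: score each process model against the comment's bag of words."""
    scores = Counter()
    for word, n in bag_of_words(comment).items():
        for model, weight in index.get(word, {}).items():
            scores[model] += n * weight
    return scores.most_common()

# Hypothetical process models, reduced to their activity labels
models = {
    "refund": "receive refund request approve refund issue refund",
    "delivery": "pack order ship order deliver order",
}
index = build_index(models)
ranked = rank_models(
    "Still waiting for my refund, nobody will approve the refund request",
    index,
)
```

The highest-ranked model points to the business process most likely related to the complaint.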

BPO through the BPM Lens: A Case study

The process outsourcing industry, a multi-billion dollar market, is highly competitive, with intense competition among companies across outsourcing destinations. After the initial cost advantages, Business Process Outsourcing (BPO) clients increasingly expect innovation and improved performance, which acts as a driver for BPO providers to adopt different aspects of BPM. Most of the literature on BPO and BPM focuses on the outsourcing organization’s point of view. While BPOs use Six Sigma techniques and IT to improve their performance, the adoption of BPM by a BPO has not been analyzed from a holistic perspective.

Process Improvement by Simplification of Policy, and Procedure and Alignment of Organizational Structure

In this paper we analyze a process improvement project in detail and propose a generic framework for process improvement through process simplification. It involves simplification of relevant policies, simplification of procedures and alignment of process execution teams along policy, process and systems.

Discrete Event Monte-Carlo Simulation Based Decision Support System for Business Process Management

This paper presents a discrete event Monte-Carlo simulation based decision support system (DSS), which helps in managing business processes. Process managers use available information on work load, resource availability, task time, etc., along with their experience, to predict the performance of the process and take corrective action if required. We describe a method and a system which takes available information about work load and resource availability to predict near-future system performance using discrete event simulation, compares the expected performance with the desired service level, and alerts the manager to take corrective action.
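A much simplified sketch of this predict-and-compare loop follows. It assumes Poisson arrivals, exponentially distributed task times and a first-come, first-served pool of identical resources; the function names, parameters and figures are hypothetical and are not taken from the system described in the paper.

```python
import random

def simulate_once(arrival_rate, mean_task_time, servers, horizon, rng):
    """One discrete-event run; returns turnaround times of arriving items."""
    free_at = [0.0] * servers            # when each resource becomes free
    turnarounds = []
    clock = rng.expovariate(arrival_rate)
    while clock < horizon:
        idx = min(range(servers), key=free_at.__getitem__)  # earliest free
        start = max(clock, free_at[idx])
        finish = start + rng.expovariate(1.0 / mean_task_time)
        free_at[idx] = finish
        turnarounds.append(finish - clock)
        clock += rng.expovariate(arrival_rate)              # next arrival
    return turnarounds

def predicted_service_level(target, runs=200, seed=0, **scenario):
    """Monte-Carlo estimate of the share of items finished within target."""
    rng = random.Random(seed)
    within = total = 0
    for _ in range(runs):
        times = simulate_once(rng=rng, **scenario)
        within += sum(t <= target for t in times)
        total += len(times)
    return within / total if total else 1.0

# Hypothetical scenario: 2 items/hour, 1-hour tasks, 8-hour shift
scenario = dict(arrival_rate=2.0, mean_task_time=1.0, horizon=8.0)
understaffed = predicted_service_level(target=2.0, servers=1, **scenario)
staffed = predicted_service_level(target=2.0, servers=5, **scenario)
desired = 0.8                            # assumed service-level target
alert_manager = understaffed < desired   # signal for corrective action
```

Comparing the predicted service level with the desired one gives the alert condition; rerunning with a different `servers` value shows the expected effect of a corrective action before it is taken.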

Discrete Event Monte-Carlo Simulation of Business Process for Capacity Planning: A Case Study

Strategic capacity planning is generally done using static mathematical analysis. Simulation of operational scenarios during capacity planning provides more insights into the real system behavior. This ensures better preparedness to handle operations. This case study demonstrates the benefits of discrete event Monte-Carlo simulation of business process over simple mathematical analysis for capacity planning.

Knowledge Management Practices in Indian Software Development Companies: Findings from an Exploratory Study

Knowledge management (KM) is becoming an important management responsibility as organizations increasingly invest significant information technology (IT) resources to support acquisition, storage, sharing, and retrieval of knowledge. Furthermore, KM plays a critical role in organizations that rely primarily on intellectual capital, such as software development companies. In this paper, we report the findings of an exploratory study where we investigate the KM practices of eight leading software consultancy companies in India and compare our findings with results from a similar study by Alavi and Leidner (1999).